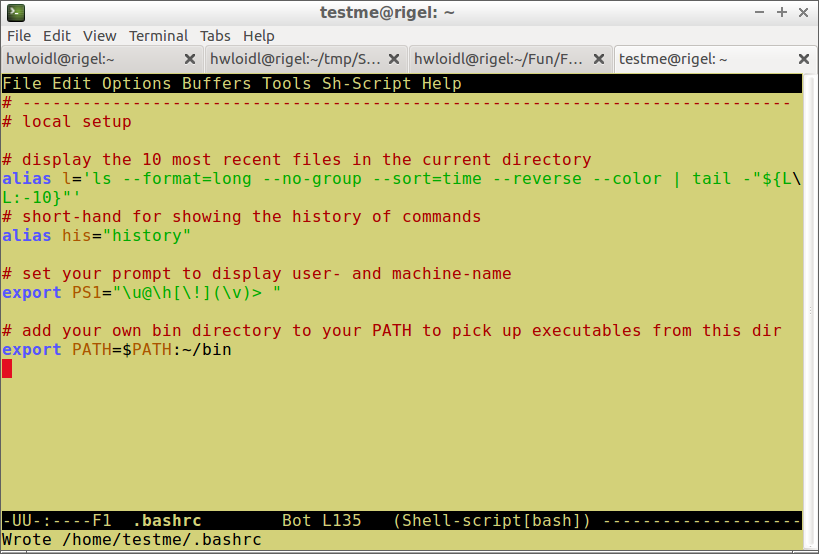

To answer your actual question, are you writing the file in one console and then reading it in another? If so, be aware that Python (and potentially the underlying libc library) performs buffered IO. That means when you perform a write() call like that, the data hasn't necessarily gone into the underlying file yet - it's sitting in memory. This is done for performance reasons - if you perform multiple write() calls then they can all be stacked up in memory, which is fast, and then sent out to disk, which is slow, all in one go. So, if you view the file from another process before the data has been pushed out to disk then it might look empty.

If you need to force all the buffered data to be written to the disk right now then you can use the flush() method on the file, e.g. testfile1.flush().

You can also do the writes inside a with statement, with open("testfile.txt", "w") as testfile1:, making the testfile1.write() calls in its body. This will automatically close the file again after the with block has finished, which also flushes any buffered data. This is useful to remember as it's really easy to forget to close files, and I generally find it cleaner than just relying on the file object's destructor to do the work.

Finally, if you need every single write() operation to go straight to the OS without being buffered by Python, consider using os.open() and os.write() instead, which give you access to the raw OS file descriptor.
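The three approaches described above (flush(), a with block, and os.open()/os.write()) can be sketched roughly as follows. This is only an illustration, not the original poster's code: the temporary directory, the file names and the strings being written are all assumptions made up for the example.

```python
import os
import tempfile

# Hypothetical scratch directory so the sketch does not touch real files.
workdir = tempfile.mkdtemp()
path = os.path.join(workdir, "testfile.txt")

# 1. Buffered write: the data may sit in memory until flush() or close().
testfile1 = open(path, "w")
testfile1.write("hello")
size_before_flush = os.path.getsize(path)  # usually still 0 at this point
testfile1.flush()                          # push Python's buffer out to the OS
size_after_flush = os.path.getsize(path)   # now the 5 bytes are in the file
testfile1.close()

# 2. A with block closes (and therefore flushes) the file automatically.
with open(path, "w") as testfile1:
    testfile1.write("written inside with")
closed_after_with = testfile1.closed       # True once the block has finished

# 3. Raw file descriptor: os.write() is not buffered by Python.
raw_path = os.path.join(workdir, "rawfile.txt")  # hypothetical second file
fd = os.open(raw_path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
os.write(fd, b"raw write")                 # bytes, not str
size_raw = os.path.getsize(raw_path)       # visible straight away
os.close(fd)
```

Note that flush() and os.write() only hand the data to the operating system, which may still cache it; if the data must reach the physical disk, os.fsync() on the file descriptor asks the OS to write it out.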

BASH TEST FOR ZERO BYTE FILE CODE

In future please try to paste the exact code you're running - in this case it was fairly easy to figure out what you meant, but sometimes the precise details of what you're doing can be critical in working out the problem.

Firstly, the code snippet above isn't opening a file - you're just assigning a 2-tuple to testfile1 and then calling the write() method on it, which will fail with the error "'tuple' object has no attribute 'write'". The call to open() is missing, so the assignment should read testfile1 = open('testfile1.txt', 'w'), followed by your testfile1.write() call.

Dropbox does background synchronisation as opposed to being a proper networked filesystem, so changes take a while to show up. The PA admins did recently make some changes which should improve performance a little, but Dropbox itself is by no means instant. I also note that you're using the Dashboard -> Files tab to view your file. Personally I've also observed a short delay in updates here (something like 10-15 seconds) so I would recommend opening a bash shell to view your files using the cat command.
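To make the 2-tuple mistake concrete, here is a small sketch of the failing assignment next to the version that calls open(). The temporary path and the text being written are placeholders invented for the example, since the original snippet was not posted in full.

```python
import os
import tempfile

# Hypothetical location for the example file.
path = os.path.join(tempfile.mkdtemp(), "testfile1.txt")

# Mistake: this builds a 2-tuple of strings, it does not open anything.
testfile1 = (path, "w")
try:
    testfile1.write("some text")
    error_message = None
except AttributeError as exc:
    error_message = str(exc)   # 'tuple' object has no attribute 'write'

# Fix: call open() so that testfile1 is a real file object.
testfile1 = open(path, "w")
testfile1.write("some text")
testfile1.close()              # closing also flushes the buffered data

# Read the file back to confirm the data arrived.
with open(path) as check:
    contents = check.read()
```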

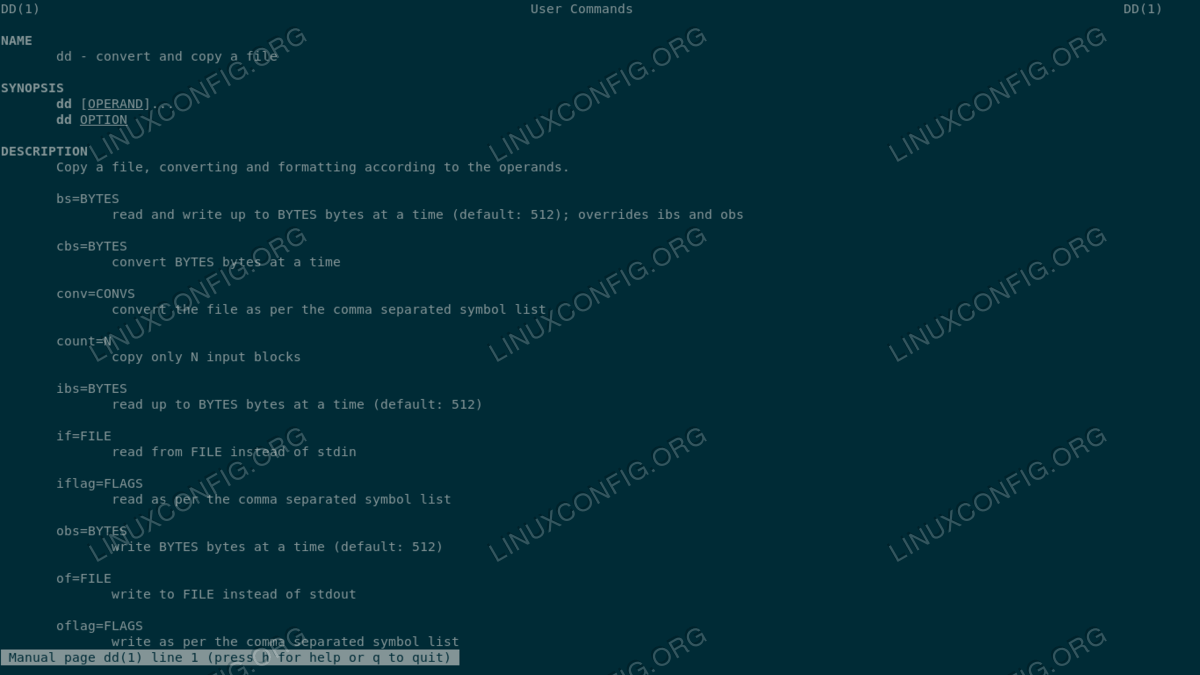

EDIT: Judging from your post in the Dropbox thread, you might be writing your file to your Dropbox folder, which I hadn't realised. I wouldn't expect it to make much difference if both your processes are running on PA, but if you're creating a file on PA and checking it on another host which has the same Dropbox share then you can expect a significant delay, anywhere from seconds to minutes.

I tried to reboot an 18.04 LTS machine but it didn't respond so after waiting some minutes I power-cycled the machine. The machine started fine but I have found some of the recently edited files are now empty. This applies to at least 5 known cases including text files, a system file and files deep in a.

- Is this file-system fatally corrupted and unsafe to use?
- How would I know if this is a hardware issue?
- Is this a known risk just from power-cycling ubuntu?

Setup and checks so far:

- Ubuntu installed on an Ext4 partition (dual boot with windows).
- Disks cannot repair the boot disk (it's busy), so it needs a live usb stick.
- Running sudo touch /forcefsck followed by sudo shutdown -r now did not visibly check the disk.
- Using a live disk, Disks check says that the partition is undamaged.
- Running fsck -f /dev/nvme0n1p5 from a live disk returns no errors, exit code = 0.

smartctl output:

    smartctl 6.6 r4324 (local build)
    Copyright (C) 2002-16, Bruce Allen, Christian Franke,
    === START OF INFORMATION SECTION ===
    Firmware Updates (0x16): 3 Slots, no Reset required
    Optional Admin Commands (0x0037): Security Format Frmw_DL *Other*
    Optional NVM Commands (0x005f): Comp Wr_Unc DS_Mngmt Wr_Zero Sav/Sel_Feat *Other*
    Local Time is: Sun Nov 17 01:01:26 2019 GMT